As I continue exploring AI, I’m realizing just how much I want to see what all the fuss about agents is. Problem is, I don’t just want to turn an agent loose: I have trust issues! There are also a bunch of things I’m just plain interested in learning about how agentic AI actually works under the hood. I’m a hacker/tinkerer, so the solution is obvious, right?

I’m building my own agentic AI suite.

Goals

- Learn more about the nuts-and-bolts of tuning an LLM for responses

- Experiment with memory, a knowledge base, and Retrieval-Augmented Generation (RAG)

- Tinker with a skills framework

- Push what I can do with various partially-autonomous agents, sub-agents, and capabilities

- Altogether, build myself a “Really Smart Journal”: a personal AI agent, tuned to my personality, biography, and goals, that generates the feedback I need and want.

Of course, I’m doing this as another round of AI-driven coding, to put some of what I’ve learned with plan-review-iterate-retro cycles to use.

Architecture

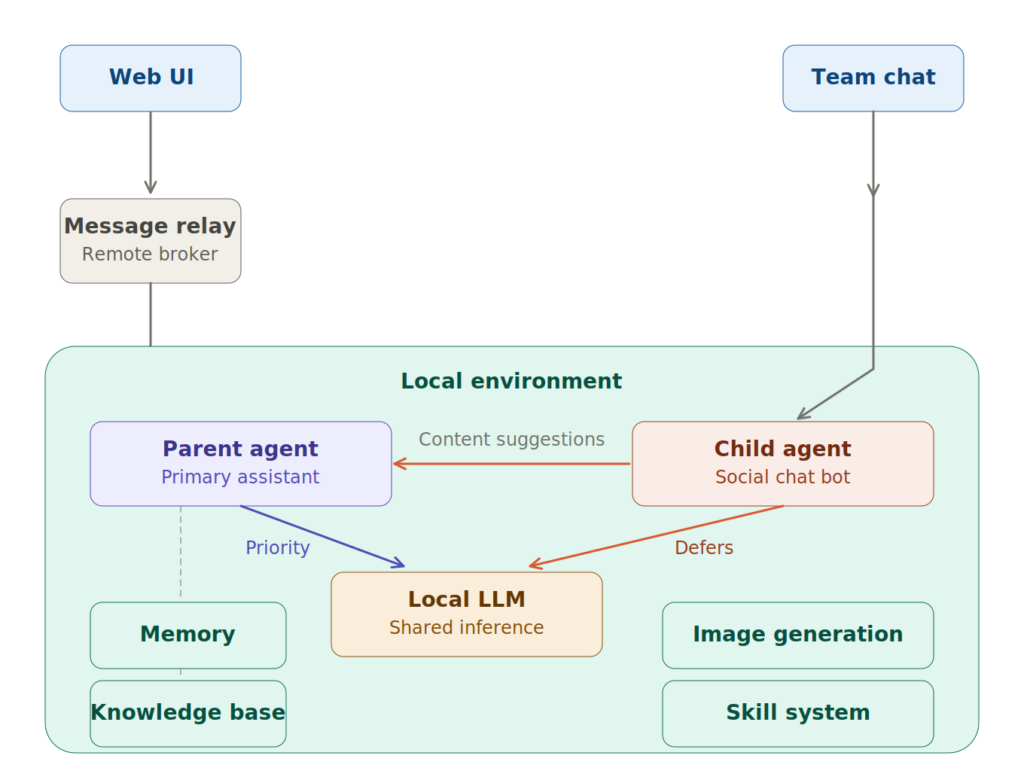

To oversimplify, this agent framework is built as:

- An on-prem server running all of the AI infrastructure

  - LLM

  - Memory and knowledge

  - Skills

  - Main agent

  - Child agents

- A front end web site

  - To avoid reverse-proxy tunnel voodoo, I decided to accept slower responsiveness and use a broker with active polling

- Other integrations as they come up

  - Image generation (through the AI server)

  - Chat integration (think Slack, Discord, etc)
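To make the broker-with-active-polling idea concrete, here is a minimal sketch in Python. It is not my actual code: the `Broker` class, its method names, and `poll_once` are all made up for illustration, and the broker is an in-memory stand-in rather than a real service. It also assumes the on-prem server is the side doing the polling, so it only ever makes outbound calls and nothing has to tunnel in.

```python
import itertools
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Broker:
    """Hypothetical in-memory stand-in for the broker between web site and AI server."""
    _pending: deque = field(default_factory=deque)
    _replies: dict = field(default_factory=dict)
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1))

    def submit(self, prompt: str) -> int:
        """Front end: queue a prompt and get a ticket id back."""
        ticket = next(self._ids)
        self._pending.append((ticket, prompt))
        return ticket

    def claim(self) -> Optional[tuple]:
        """AI server: take the oldest pending request, or None if idle."""
        return self._pending.popleft() if self._pending else None

    def post_reply(self, ticket: int, reply: str) -> None:
        """AI server: store the finished reply under its ticket."""
        self._replies[ticket] = reply

    def fetch_reply(self, ticket: int) -> Optional[str]:
        """Front end: poll for a reply; None means it is not ready yet."""
        return self._replies.pop(ticket, None)


def poll_once(broker: Broker, handle: Callable[[str], str]) -> bool:
    """One polling pass by the AI server. Returns True if a job was processed."""
    job = broker.claim()
    if job is None:
        return False
    ticket, prompt = job
    broker.post_reply(ticket, handle(prompt))
    return True
```

In a sketch like this, the "slower responsiveness" shows up as the gap between polling passes: a reply can never arrive faster than the server's next `poll_once` plus the front end's next `fetch_reply`.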

A key feature not depicted here is a multi-tiered authorization and auditing governance layer. I have trust issues, so even though I’m slowly granting the system autonomous abilities, I need to be in the loop: there are many things the system is not allowed to do, or needs explicit permission for, and all of that needs to be logged and auditable.
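As a rough illustration of what "multi-tiered" can mean, here is a small Python sketch. The three tiers, the `Governor` class, the action names, and the default-deny rule are assumptions for the example, not a description of the real layer: every request is either allowed outright, needs my explicit approval, or is denied, and every decision lands in an audit log either way.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Callable


class Tier(Enum):
    ALLOW = "allow"  # the agent may act on its own
    ASK = "ask"      # a human must approve each request
    DENY = "deny"    # never permitted


@dataclass
class Governor:
    """Hypothetical gatekeeper that every agent action passes through."""
    policy: dict                          # action name -> Tier (names are illustrative)
    approve: Callable[[str, str], bool]   # human-in-the-loop callback for ASK actions
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: str, action: str) -> bool:
        # Actions missing from the policy are denied by default.
        tier = self.policy.get(action, Tier.DENY)
        if tier is Tier.ALLOW:
            allowed = True
        elif tier is Tier.ASK:
            allowed = bool(self.approve(agent, action))
        else:
            allowed = False
        # Record every decision, including refusals, so it can be audited later.
        self.audit_log.append({
            "when": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "tier": tier.value,
            "allowed": allowed,
        })
        return allowed
```

The default-deny lookup is the part that matters most for trust issues: a new capability stays off until it is explicitly added to the policy.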

How it’s Going

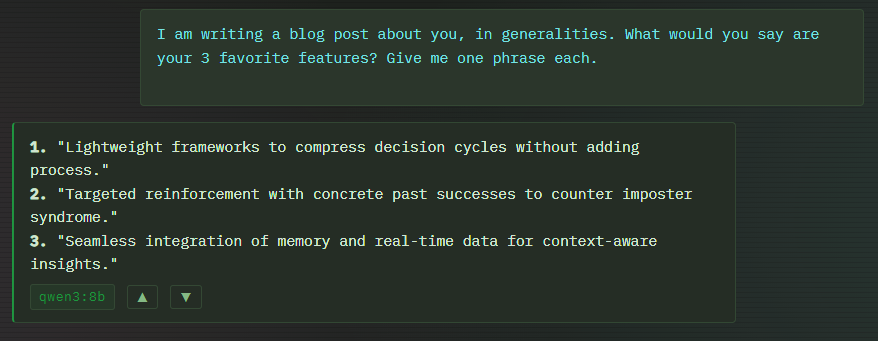

The system as diagrammed above is up and running! I’m using it daily now to get a feel for the system prompt, its behaviors, and how the RAG performs over time. I have a ton of future plans, including knowledge base health and updating, and I want to push forward (carefully) with granting more autonomy. For now, I seem to have gotten it to stop lying about capabilities it doesn’t have (I’ll probably do a whole post on Grounding someday), and I’m finding it pleasant to use regularly. Even if it DOES, as this screenshot shows, like to reveal some of my coaching needs…